Tuesday, 12 December 2017

First steps with Vert.x and Infinispan REST API (Part 1)

Welcome to the first in a multi-part series of blog posts about creating Eclipse Vert.x applications with Infinispan. The purpose of this first tutorial is to showcase how to create a REST API.

All the code of this tutorial is available in this GitHub repository. The backend is a Java project using Maven, so all the needed dependencies can be found in the pom.xml.

What is Vert.x?

Vert.x is a toolkit for building reactive applications on the JVM. It is event-driven and non-blocking. It is based on the Reactor pattern, like Node.js, but unlike Node.js it can easily use all the cores of your machine, so you can create highly concurrent and performant applications. Code examples can be found in this repository.

REST API

Let’s start by creating a simple endpoint that displays a welcome message on '/'. In Vert.x this is done by creating a verticle. A verticle is a unit of deployment that processes incoming events on an event loop. Event loops are used in asynchronous programming models. I won’t spend more time explaining these concepts here, as this is done very well in this Devoxx Vert.x talk and in the documentation available here.

We need to override the start method, create a 'router' so '/' requests can be handled, and finally create an HTTP server.

The most important thing to remember about Vert.x is that we can NEVER EVER call blocking code on the event loop (we will see how to deal with blocking APIs just after). If we do, we block the event loop and won’t be able to serve incoming requests properly.

Run the main method, point your browser to http://localhost:8081/ and you will see the welcome message!

Connecting with Infinispan

Now we are going to create a REST API that uses Infinispan. The purpose here is to post and get names by id. We are going to use the default cache in Infinispan for this example, and we will connect to it remotely. To do that, we are going to use the Infinispan Hot Rod protocol, which is the recommended way to do it (but we could use the REST or Memcached protocols too).

Start Infinispan locally

The first thing we are going to do is to run an Infinispan Server locally. We download the Infinispan Server from here, unzip the downloaded file and run ./bin/standalone.sh.

If you are using Docker on Linux, you can easily use the https://hub.docker.com/r/jboss/infinispan-server/[Infinispan Docker image]. If you are using Docker for Mac, at the time of this writing there is an issue with internal IP addresses: they can’t be reached externally. Different workarounds exist, but the easiest thing for this example is simply to download and run the standalone server locally. We will see how to use the Docker image in OpenShift later.

The Hot Rod server is listening on localhost:11222.

Connect the client to the server

The code we need to connect to Infinispan from our Java application is the following:

This code is blocking. As I said before, we can’t block the event loop, and that is what will happen if we call these APIs directly from a verticle. The code must be called using the vertx.executeBlocking method, passing a Handler. The code in the handler will be executed on a worker thread pool, and the result will be passed back asynchronously. Instead of overriding the start method, we are going to override start(Future<Void> startFuture). This way, we will be able to handle errors.
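The offloading idea can be sketched without Vert.x itself. The following is a JDK-only analogy and not the Vert.x API: a single-threaded executor stands in for the event loop, a small pool stands in for the worker pool, and `slowLookup` is a hypothetical blocking call invented for this illustration.

```java
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;

public class OffloadBlockingSketch {

    // Hypothetical blocking call, standing in for a blocking client API.
    static String slowLookup(String key) {
        try {
            Thread.sleep(50); // simulate blocking I/O
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        return "value-for-" + key;
    }

    // Runs the blocking call on the worker pool, then hands the result
    // back to the single "event loop" thread for further processing.
    static CompletableFuture<String> lookupAsync(String key,
                                                 ExecutorService workers,
                                                 ExecutorService eventLoop) {
        return CompletableFuture
                .supplyAsync(() -> slowLookup(key), workers)
                .thenApplyAsync(String::toUpperCase, eventLoop);
    }

    // Small driver; join() blocks only the calling (demo) thread,
    // never the "event loop" executor.
    static String demo() {
        ExecutorService workers = Executors.newFixedThreadPool(2);
        ExecutorService eventLoop = Executors.newSingleThreadExecutor();
        try {
            return lookupAsync("42", workers, eventLoop).join();
        } finally {
            workers.shutdown();
            eventLoop.shutdown();
        }
    }

    public static void main(String[] args) {
        System.out.println(demo());
    }
}
```

In a real verticle the same separation is what vertx.executeBlocking provides: the blocking work runs on a worker thread and the result handler is invoked asynchronously.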

To stop the client, the API supplies a non blocking method that can be called when the verticle is stopped, so we are safe on that.

We are going to create an abstract CacheAccessVerticle where we will initialise the manager and get the default cache. When everything is correct and the defaultCache variable is initialised, we will log a message and execute the initSuccess abstract method.

REST API to create names

We are going to add 3 new endpoints.

- GET /api displays the API name

- POST /api/cutenames creates a new name

- GET /api/cutenames/id displays a name by id

The CuteNamesRestAPI verticle can now extend CacheAccessVerticle and override the initSuccess method instead of the start method.

POST

Our goal is to use curl to create a name like this:

curl -X POST -H "Content-Type: application/json" -d '{"name":"Oihana"}' "http://localhost:8081/api/cutenames"

For those who are not familiar with Basque names, Oihana means 'rainforest' and is a super cute name. Those who know me will confirm that I’m absolutely not biased in making this statement.

To read the body content, we need to add a body handler to the route, otherwise the body won’t be parsed. This is done by calling router.route().handler(BodyHandler.create()).

The handler that will handle the post method in '/api/cutenames' is a RoutingContext handler. We want to create a new name in the default cache. For that, we will call putAsync method from the defaultCache.

The server responds 201 when the name is correctly created, and 400 when the request is not correct.

GET by id

To create a get endpoint by id, we need to declare a route that will take a parameter :id. In the route handler, we are going to call getAsync method.

If we run the main, we can POST and GET names using curl!

curl -X POST -H "Content-Type: application/json" -d '{"id":"42", "name":"Oihana"}' "http://localhost:8081/api/cutenames"

Cute name added

curl -X GET -H "Content-Type: application/json" "http://localhost:8081/api/cutenames/42"

{"name":"Oihana"}

Wrap up

We have learned how to create a REST API with Vert.x, powered by Infinispan. The repository has some unit tests using the web client. Feedback is more than welcome to improve the code and the provided examples. I hope you enjoyed this tutorial! In the next tutorial you will learn how to create a PUSH API.

Tags: vert.x rest API

Monday, 19 June 2017

Cache operations impersonation: do as I say (or maybe as she says)

The implementation of cache authorization in Infinispan has traditionally followed the JAAS model of wrapping calls in a PrivilegedAction invoked through Subject.doAs(). This led to the following cumbersome pattern:

We also provided an implementation which, instead of relying on enabling the SecurityManager, could use a lighter and faster ThreadLocal for storing the Subject:

While this solves the performance issue, it still leads to unreadable code. This is why, in Infinispan 9.1 we have introduced a new way to perform authorization on caches:

Obviously, for multiple invocations, you can hold on to the "impersonated" cache and reuse it:

We hope this will make your life simpler and your code more readable!

Tags: security API

Monday, 12 October 2015

Functional Map API: Listeners

We continue with the blog series on the experimental Functional Map API which was released as part of Infinispan 8.0.0.Final. In this blog post we’ll be focusing on how to listen for Functional Map events. For reference, here are the previous entries in the series:

The first thing to notice about Functional Map listeners is that they only send events post-event, which means the events are received after the event has happened. In contrast with Infinispan Cache listeners, there are no pre-event listener invocations. The reason pre-events are not available is that listeners are meant to be an opportunity to find out what has happened, and pre-events can suggest that the listener is able to alter the execution of the operation, which listeners are not really suited for. If you are interested in pre-events or in potentially altering the execution, plugging in custom interceptors is the recommended solution.

Functional Map offers two types of event listeners: write-only operation listeners and read-write operation listeners.

Write-Only Listeners

Write listeners enable users to register listeners for any cache entry write events that happen in either a read-write or write-only functional map.

Listeners for write events cannot distinguish between cache entry created and cache entry modify/update events because they don’t have access to the previous value. All they know is that a new non-null entry has been written. However, write event listeners can distinguish between entry removals and cache entry create/modify-update events because they can query the new entry’s value via the ReadEntryView.find() method.

Adding a write listener is done via the WriteListeners interface which is accessible via both ReadWriteMap.listeners() and WriteOnlyMap.listeners() method. A write listener implementation can be defined either passing a function to onWrite(Consumer<ReadEntryView<K, V>>) method, or passing a WriteListener implementation to add(WriteListener<K, V>) method. Either way, all these methods return an AutoCloseable instance that can be used to de-register the function listener. Example and expected output:
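As a rough illustration of this registration pattern, the sketch below models it with plain JDK types. It is not the Infinispan API: `Registry`, `Registration` and `fire` are hypothetical names, and an `Optional` stands in for what ReadEntryView.find() would return (present for a write, empty for a removal).

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Optional;
import java.util.concurrent.CopyOnWriteArrayList;
import java.util.function.Consumer;

public class WriteListenerSketch {

    // Hypothetical handle type: AutoCloseable without a checked exception.
    interface Registration extends AutoCloseable {
        @Override
        void close();
    }

    // Hypothetical stand-in for a listener registry.
    static class Registry<V> {
        private final List<Consumer<Optional<V>>> listeners = new CopyOnWriteArrayList<>();

        // Registers a listener and returns a handle that de-registers it.
        Registration onWrite(Consumer<Optional<V>> listener) {
            listeners.add(listener);
            return () -> listeners.remove(listener);
        }

        // Fires a post-event: a present value is a write, empty is a removal.
        void fire(Optional<V> newValue) {
            listeners.forEach(l -> l.accept(newValue));
        }
    }

    static List<String> demo() {
        List<String> log = new ArrayList<>();
        Registry<String> registry = new Registry<>();
        try (Registration handle = registry.onWrite(
                v -> log.add(v.map(x -> "written: " + x).orElse("removed")))) {
            registry.fire(Optional.of("v1"));   // create or update, cannot tell which
            registry.fire(Optional.empty());    // removal
        }
        registry.fire(Optional.of("ignored"));  // listener was closed, nothing logged
        return log;
    }

    public static void main(String[] args) {
        System.out.println(demo());
    }
}
```

Note how the listener can tell a removal from a write, but has no way to tell a create from an update, since it never sees the previous value.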

Read-Write Listeners

Read-write listeners enable users to register listeners for cache entry created, modified and removed events, and also register listeners for any cache entry write events. Entry created, modified and removed events can only be fired when these originate on a read-write functional map, since this is the only one that guarantees that the previous value has been read, and hence the differentiation between create, modified and removed can be fully guaranteed.

Adding a read-write listener is done via the ReadWriteListeners interface which is accessible via the ReadWriteMap.listeners() method. If interested in only one of the event types, the simplest way to add a listener is to pass a function to either the onCreate, onModify or onRemove methods. Otherwise, if interested in multiple types of events, passing a ReadWriteListener implementation via add(ReadWriteListener<K, V>) is the easiest. As with write listeners, all these methods return an AutoCloseable instance that can be used to de-register the listener.

Here’s an example of adding a ReadWriteListener that handles multiple types of events:

Closing Notes

More listener event types are yet to be implemented for Functional API, such as expiration events or passivation/activation events. We are capturing this future work and other improvements under the ISPN-5704 issue.

We’d love to hear from you on how you are finding this new API. To provide feedback or report any problems with it, head to our user forums and create a post there.

In the next blog post in the series, we’ll be looking into how to pass per-invocation parameters to tweak operations.

Cheers,

Galder

Tags: functional listeners API

Tuesday, 08 September 2015

Functional Map API: Working with multiple entries

We continue with the blog series on the experimental Functional Map API which was released as part of Infinispan 8.0.0.Final. In this blog post we’ll be focusing on how to work with multiple entries at the same time. For reference, here are the previous entries in the series:

The approach taken by the Functional Map API when working with multiple keys is to provide a lazy, pull-style API. All multi-key operations take a collection parameter which indicates the keys to work with (and sometimes contains value information too), and a function to execute for each key/value pair. Each function’s ability depends on the entry view received as function parameter, which changes depending on the underlying map: ReadEntryView for ReadOnlyMap, WriteEntryView for WriteOnlyMap, or ReadWriteEntryView for ReadWriteMap. All multi-key operations, except the ones from WriteOnlyMap, return an instance of Traversable, which exposes methods for working with the returned data from each function execution. Let’s see an example:

This example demonstrates some of the key aspects of working with multiple entries using the Functional Map API:

- As explained in the previous blog post, all data-handling methods (including multi-key methods) for WriteOnlyMap return CompletableFuture<Void>, because there’s nothing the function can provide that could not be computed in advance or outside the function.

- Normally, the order of the Traversable matches the order of the input collection, though this is not currently guaranteed.

There is a special type of multi-key operations which work on all keys/entries stored in Infinispan. The behaviour is very similar to the multi-key operations shown above, with the exception that they do not take a collection of keys (and/or values) as parameters:

There are a few interesting things to note about working with all entries using the Functional Map API:

- When working with all entries, the order of the Traversable is not guaranteed.

- Read-only’s keys() and entries() offer the possibility to traverse all keys and entries present in the cache. When traversing entries, both keys and values including metadata are available. Contrary to Java’s ConcurrentMap, there’s no possibility to navigate only the values (and metadata), since there’s little to be gained from such a method and, once a key’s entry has been retrieved, there’s no extra cost to provide the key as well.

It’s worth noting that when we sat down to think about how to work with multiple entries, we considered having a push-style API where the user would receive callbacks pushed as the entries to work with were located. This is the approach that reactive APIs such as Rx follow, but we decided against using such APIs at this level for several reasons:

- We have huge interest in providing an Rx-style API for Infinispan, but we didn’t want the core API to have a dependency on Rx or Reactive Streams.

- We didn’t want to reimplement a push-style async API since this is not trivial to do and requires careful thinking, especially around back-pressure and flow control.

- Push-style APIs require more work on the user side compared to pull-style APIs.

- Pull-style APIs can still be lazy and partly asynchronous since the user can decide to work with the Traversable at a later stage, and the separation between intermediate and terminating operations provides a good abstraction to avoid unnecessary computation.

In fact, it is this desire to keep a clear separation between intermediate and terminating operations in Traversable that has resulted in having no manual way to iterate over the Traversable. In other words, there are no iterator() or spliterator() methods in Traversable, since these are often associated with manual, user-end iteration, and we want to avoid that: in the majority of cases, Infinispan knows best how to iterate over the data.
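The separation between intermediate and terminating operations can be illustrated with java.util.stream.Stream, used here purely as an analogy for Traversable (the sketch does not use the Infinispan API). The mapping function is not invoked until a terminal operation pulls the data.

```java
import java.util.List;
import java.util.concurrent.atomic.AtomicInteger;
import java.util.stream.Collectors;
import java.util.stream.Stream;

public class LazyPullSketch {

    // Returns {calls before terminal op, calls after terminal op, result size}.
    static int[] demo() {
        AtomicInteger calls = new AtomicInteger();

        // Intermediate operation: only recorded, nothing is computed yet.
        Stream<String> pending = Stream.of("a", "b", "c")
                .map(s -> {
                    calls.incrementAndGet();
                    return s.toUpperCase();
                });
        int before = calls.get();

        // Terminal operation: the data is pulled and the function runs.
        List<String> result = pending.collect(Collectors.toList());
        int after = calls.get();

        return new int[] {before, after, result.size()};
    }

    public static void main(String[] args) {
        int[] r = demo();
        System.out.println("calls before=" + r[0] + ", after=" + r[1] + ", results=" + r[2]);
    }
}
```

Because the caller never iterates manually, the implementation is free to decide how and when the elements are actually produced.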

In the next blog post, we’ll be looking at how to work with listeners using the Functional Map API.

Cheers, Galder

Tags: functional API lambda

Monday, 07 September 2015

Distributed Streams

Now that Infinispan supports Java 8, we can take full advantage of some of the new features. One of the big features of Java 8 is the new Stream classes. This turns data processing on its head: instead of having to iterate over the data yourself, the underlying Stream handles that and you just provide the operations to perform on it. This lends itself well to distributed processing, as the iteration is handled entirely by the implementation (in this case Infinispan).

I am therefore glad to introduce Distributed Streams for Infinispan 8! This allows any operation you can perform on a regular Stream to also be performed on a distributed cache (assuming the operation and data are marshallable).

Marshallability

When using a distributed or replicated cache, the keys and values of the cache must be marshallable. This is the same case for intermediate and terminal operations when using the distributed streams. Normally you would have to provide an instance of some new class that is either Serializable or has an Externalizer registered for it as described in the marshallable section of the user guide.

However, Java 8 also introduced lambdas, which can be defined as serializable very easily (although it is a bit awkward). An example of this serialization can be found here.
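For readers unfamiliar with the syntax, here is a minimal JDK-only sketch of a serializable lambda. The intersection cast `(Function<String, Integer> & Serializable)` is standard Java 8; the class and method names are made up for this illustration, and plain Java serialization is used only to show that the lambda survives a round trip.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.ObjectInputStream;
import java.io.ObjectOutputStream;
import java.io.Serializable;
import java.util.function.Function;

public class SerializableLambdaSketch {

    // The cast to an intersection type makes the lambda Serializable.
    static Function<String, Integer> wordLength() {
        return (Function<String, Integer> & Serializable) s -> s.length();
    }

    static byte[] toBytes(Object o) throws IOException {
        ByteArrayOutputStream bytes = new ByteArrayOutputStream();
        try (ObjectOutputStream out = new ObjectOutputStream(bytes)) {
            out.writeObject(o);
        }
        return bytes.toByteArray();
    }

    // Serializes the function and reads it back, as a remote node would.
    @SuppressWarnings("unchecked")
    static Function<String, Integer> roundTrip(Function<String, Integer> f) {
        try {
            byte[] data = toBytes(f);
            try (ObjectInputStream in = new ObjectInputStream(new ByteArrayInputStream(data))) {
                return (Function<String, Integer>) in.readObject();
            }
        } catch (IOException | ClassNotFoundException e) {
            throw new IllegalStateException(e);
        }
    }

    public static void main(String[] args) {
        System.out.println(roundTrip(wordLength()).apply("infinispan"));
    }
}
```

Without the `& Serializable` part of the cast, writeObject would fail with a NotSerializableException.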

Some of you may also be aware of the Collectors class which is used with the collect method on a stream. Unfortunately, all of the Collectors produced are not able to be marshalled. As such, Infinispan has added a utility class that can work in conjunction with the Collectors class. This allows you to still use any combination of the Collectors classes and still work properly when everything is required to be marshalled.

Parallelism

Java 8 streams naturally have a sense of parallelism. That is that the stream can be marked as being parallel. This in turn allows for the operations to be performed in parallel using multiple threads. The best part is how simple it is to do. The stream can be made parallel when first retrieving it by invoking parallelStream or you can optionally enable it after the Stream is retrieved by just invoking parallel.
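Both ways of enabling parallelism look like this on an ordinary JDK collection (a small sketch with made-up names; on a cache the stream would come from the cache’s collections instead):

```java
import java.util.Arrays;
import java.util.List;

public class ParallelStreamSketch {

    // Chooses parallelism when the stream is first retrieved.
    static int totalLength(List<String> words, boolean parallel) {
        return (parallel ? words.parallelStream() : words.stream())
                .mapToInt(String::length)
                .sum();
    }

    // Enables parallelism after the stream has been retrieved.
    static boolean enabledAfterwards(List<String> words) {
        return words.stream().parallel().isParallel();
    }

    public static void main(String[] args) {
        List<String> words = Arrays.asList("one", "three", "five");
        System.out.println(totalLength(words, true));
        System.out.println(enabledAfterwards(words));
    }
}
```

The result of the computation is the same either way; only the threads used to compute it differ.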

The new Distributed Streams from Infinispan take this one step further, with what I am calling parallel distribution. Since data is already partitioned across nodes, we can also allow operations to be run simultaneously on different nodes. This option is enabled by default, but it can be controlled using the new CacheStream interface discussed just below. Also, to be clear, the Java 8 parallel option can be used in conjunction with parallel distribution. This just means you will have concurrent operations running on multiple nodes across multiple threads on each node.

CacheStream interface

There is a new interface, CacheStream, that allows for controlling additional options when using a Distributed Stream. I am highlighting the added methods (note that comments have been removed from the gist).

distributedBatchSize

This method controls how many elements are brought back at one time for operations that are key aware. These operations are (spl)iterator and forEach. This is useful to tweak how many keys are held in memory from a remote node. Thus it is a tradeoff of performance (more keys) versus memory. This defaults to the chunk size as configured by state transfer.

parallelDistribution / sequentialDistribution

This was discussed in the parallelism section above. Note that all commands have this enabled by default except for (spl)iterator methods.

filterKeys

This method can be used to have the distributed stream only operate on a given set of keys. This is done in a very efficient way as it will only perform the operation on node(s) that own the given keys. Using a given set of keys also allows for constant access time from the data container/store as the cache doesn’t have to look at every single entry in the cache.

filterKeySegments (advanced users only)

This is useful to do filtering of instances in a more performant way. Normally, you could use the filter intermediate operation, but this method is performed before any of the operations are performed to most efficiently limit the entries that are presented for stream processing. For example, if only a subset of segments are required, it may not have to send a remote request.

segmentCompletionListener (advanced users only)

Similar to the previous method, this is related to key segments. This listener allows the end user to be notified when a segment has completed processing. This can be useful if you want to keep track of completion: if this node goes down, you can rerun the processing with only the unprocessed segments. Currently, this listener is only supported for (spl)iterator methods.

disableRehashAware (advanced users only)

By default, all stream operations are what is called rehash aware. That is if a node joins or leaves the cluster while the operation is in progress the cluster will be aware of this and ensure that all data is processed properly with no loss (assuming no data was actually lost).

This can be disabled by calling disableRehashAware; however, if a rehash occurs in the middle of the operation, it is possible that not all data will be processed. It should be noted that data is never processed multiple times with this disabled; only a loss of data can occur.

This option is not normally recommended unless you have a situation where you can afford to only operate on a subset of data. The tradeoff is that the operation can perform faster, especially (spl)iterator and forEach methods.

Map/Reduce

The age old example of map/reduce is always word count. Streams allow you to do that as well! Here is an equivalent word count example assuming you have a Cache containing String keys and values and you want the count of all words in the values. Some of you may be wondering how this relates to our existing map/reduce framework. The plan is to deprecate the existing Map/Reduce and replace it completely with the new distributed streams at a later point.
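As a sketch of what such a word count looks like, here is a version written against plain java.util.stream over an ordinary Map, which stands in for the cache. The class and method names are invented for this illustration. On a distributed cache the same pipeline shape would apply, but the lambdas and the collector would additionally have to be marshallable, as discussed above.

```java
import java.util.Arrays;
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.function.Function;
import java.util.stream.Collectors;

public class WordCountSketch {

    // Counts how often each word occurs across all values of the map.
    static Map<String, Long> wordCount(Map<String, String> data) {
        return data.values().stream()
                .flatMap(v -> Arrays.stream(v.split("\\s+")))   // "map" phase: split into words
                .filter(w -> !w.isEmpty())
                .collect(Collectors.groupingBy(                  // "reduce" phase: count per word
                        Function.identity(), Collectors.counting()));
    }

    static Map<String, Long> demo() {
        Map<String, String> data = new LinkedHashMap<>();
        data.put("k1", "hello world");
        data.put("k2", "hello infinispan");
        return wordCount(data);
    }

    public static void main(String[] args) {
        System.out.println(demo());
    }
}
```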

Remember though that distributed streams can do so much more than just map/reduce. And there are a lot of examples already out there for streams. To use the distributed streams, you just need to make sure your operations are marshallable, and you are good to go.

Here are a few pages with examples of how to use streams straight from Oracle:

http://www.oracle.com/technetwork/articles/java/ma14-java-se-8-streams-2177646.html

http://www.oracle.com/technetwork/articles/java/architect-streams-pt2-2227132.html

I hope you enjoy Distributed Streams. We hope they change how you interact with your data in the cluster!

Let us know what you think, any issues or usages you would love to share!

Cheers,

Will

Tags: java 8 streams API

Wednesday, 02 September 2015

Functional Map API: Working with single entries

In this blog post we’ll continue with the introduction of the experimental Functional Map API, which was released as part of Infinispan 8.0.0.Final, focusing on how to manipulate data using single-key operations.

As mentioned in the Functional Map API introduction, there are three types of operations that can be executed against a functional map: read-only operations (executed via ReadOnlyMap), write-only operations (executed via WriteOnlyMap), and read-write operations (executed via ReadWriteMap).

Firstly, we need to construct instances of ReadOnlyMap, WriteOnlyMap and ReadWriteMap to be able to work with them:

Next, let’s see all three types of operations in action, chaining them to store a single key/value pair along with some metadata, then read it and finally delete it, returning the previously stored data:

This example demonstrates some of the key aspects of working with single entries using the Functional Map API:

- Single entry methods are asynchronous, returning CompletableFuture instances which provide methods to compose and chain operations so that it can feel as if they’re being executed sequentially. Unfortunately Java does not have Haskell’s do notation or Scala’s for comprehensions to make it more palatable, but it’s great news that Java finally offers mechanisms to work with CompletableFutures in a non-blocking way, even if they’re a bit more verbose than what’s proposed in other languages.

- All data-handling methods for WriteOnlyMap return CompletableFuture<Void>, meaning that the user can find out when the operation has completed but nothing else, because there’s nothing the function can provide that could not be computed in advance or outside the function.

- The return type for most of the data-handling methods in ReadOnlyMap (and ReadWriteMap) is quite flexible. So, a function can decide to return value information, or metadata, or for convenience, it can also return the ReadEntryView it receives as parameter. This can be useful for users wanting to return both value and metadata parameter information.

- The read-write operation demonstrated above showed how to remove an entry and return the previously associated value. In this particular case, we know there’s a value associated with the entry and hence we called ReadEntryView.get() directly, but if we were not sure whether the value is present or not, ReadEntryView.find() should be called and the Optional instance returned instead.

- In the example, the Lifespan metadata parameter is constructed using the new Java Time API available in Java 8, but it could equally have been done with java.util.concurrent.TimeUnit as long as the conversion was done to the number of milliseconds during which the entry should be accessible.

- Lifespan-based expiration works just as it does with other Infinispan APIs, so you can easily modify the example to lower the lifespan, wait for the duration to pass and then verify that the value is not present any more.
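The chaining style described in the first point can be sketched with the JDK alone. In the following illustration a ConcurrentHashMap plays the role of the data store, and `write`, `read` and `remove` are hypothetical helpers that merely mimic asynchronous single-key operations; none of this is the Functional Map API itself.

```java
import java.util.Map;
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.ConcurrentHashMap;

public class ChainedOpsSketch {

    private static final Map<String, String> store = new ConcurrentHashMap<>();

    // Write-only style: completes with no value, only signals completion.
    static CompletableFuture<Void> write(String k, String v) {
        return CompletableFuture.runAsync(() -> store.put(k, v));
    }

    // Read-only style: completes with the current value.
    static CompletableFuture<String> read(String k) {
        return CompletableFuture.supplyAsync(() -> store.get(k));
    }

    // Read-write style: removes the entry and completes with the previous value.
    static CompletableFuture<String> remove(String k) {
        return CompletableFuture.supplyAsync(() -> store.remove(k));
    }

    // Store, then read, then remove, without blocking between the steps.
    static CompletableFuture<String> storeReadRemove(String k, String v) {
        return write(k, v)
                .thenCompose(ignore -> read(k))
                .thenCompose(readValue -> remove(k)
                        .thenApply(removed -> readValue + "/" + removed));
    }

    public static void main(String[] args) {
        System.out.println(storeReadRemove("key1", "value1").join());
    }
}
```

Each thenCompose step starts only once the previous future has completed, which is what makes the chain read almost like sequential code.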

If storing a constant value, WriteOnlyMap.eval(K, Consumer) could be used instead of WriteOnlyMap.eval(K, V, Consumer), making the code clearer, but if the value is variable, WriteOnlyMap.eval(K, V, Consumer) should be used to avoid, as much as possible, functions capturing external variables. Clearly, operations exposed by functional map can’t cover all scenarios and there might be situations where external variables are captured by functions, but these should, in general, be a minority. Here is an example showing how to implement ConcurrentMap.replace(K, V, V) where external variable capturing is required:
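To show why capturing is unavoidable in this case, here is a JDK-only analogy that builds a conditional replace on top of ConcurrentMap.computeIfPresent. It does not use the Functional Map API; the point is only that the lambda has to close over both `oldValue` and `newValue`, and over a flag used to report the outcome.

```java
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.ConcurrentMap;
import java.util.concurrent.atomic.AtomicBoolean;

public class ReplaceSketch {

    // Replaces the value for key only if it currently equals oldValue.
    static <K, V> boolean replace(ConcurrentMap<K, V> map, K key, V oldValue, V newValue) {
        AtomicBoolean replaced = new AtomicBoolean(false);
        map.computeIfPresent(key, (k, current) -> {
            // The lambda captures oldValue, newValue and the result flag.
            boolean matches = current.equals(oldValue);
            replaced.set(matches);
            return matches ? newValue : current;
        });
        return replaced.get();
    }

    public static void main(String[] args) {
        ConcurrentMap<String, String> map = new ConcurrentHashMap<>();
        map.put("k", "a");
        System.out.println(replace(map, "k", "a", "b")); // value matched, replaced
        System.out.println(replace(map, "k", "a", "c")); // value no longer matches
    }
}
```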

The reason we didn’t add a WriteOnly.eval(K, V, V, Consumer) to the API is because value-equality-based replace comparisons are just one type of replace operations that could be executed. In other cases, metadata parameter based comparison might be more suitable, e.g. Hot Rod replace operation where version (a type of metadata parameter) equality is the deciding factor to determine whether the replace should happen or not.

In the next blog post, we’ll be looking at how to work with multiple entries using the Functional Map API.

Cheers,

Galder

Tags: functional introduction API lambda

Friday, 21 August 2015

New Functional Map API in Infinispan 8 - Introduction

In Infinispan 8.0.0.Beta3, we have introduced a new experimental API for interacting with your data which takes advantage of the functional programming additions and improved asynchronous programming capabilities available in Java 8.

Over the next few weeks we’ll be introducing different aspects of the API. In this first blog post, we’ll focus on why we felt there’s a need for a new approach, answering a few key questions.

ConcurrentMap and JCache

Map-like key/value pair APIs have often been used for distributed caching and in-memory data grids. Initially, ConcurrentMap became popular but this was designed to be run within a single JVM, and hence some of the operations suffered in distributed environments or when persistence stores were attached. For example, methods such as 'https://docs.oracle.com/javase/8/docs/api/java/util/Map.html#put-K-V-[V put(K, V)]', 'https://docs.oracle.com/javase/8/docs/api/java/util/concurrent/ConcurrentMap.html#putIfAbsent-K-V-[V putIfAbsent(K, V)]', 'https://docs.oracle.com/javase/8/docs/api/java/util/concurrent/ConcurrentMap.html#replace-K-V-[V replace(K, V)]' would force implementations to return the previous value, but often this value is not needed yet this could be expensive to transfer.

JSR-107 set out to improve on this and came up with the JCache specification, which solved this particular problem by separating operations such as ConcurrentMap’s 'https://docs.oracle.com/javase/8/docs/api/java/util/Map.html#put-K-V-[V put(K, V)]' into two operations: 'https://github.com/jsr107/jsr107spec/blob/v1.0.0/src/main/java/javax/cache/Cache.java#L194[void put(K, V)]' and 'V getAndPut(K, V)', and it applied the same logic to other operations such as 'replace' by providing an alternative 'getAndReplace(K, V)', etc.

However, even though JCache was designed with distributed caching in mind, it still failed to provide an API to execute operations asynchronously and hence avoid resource underutilization by having threads waiting for remote operations to complete. 'loadAll' is probably the only exception, and it would have been the perfect candidate to return a Future or similar construct, but having to pass in a completion listener feels a bit clunky and cannot be chained easily.

In my opinion, the best parts of JCache are 'invoke' and 'https://github.com/jsr107/jsr107spec/blob/v1.0.0/src/main/java/javax/cache/Cache.java#L599[invokeAll]' methods. When you look at them, you see a lot of potential to reimplement get, put, getAndPut, getAndReplace, putAll, getAll, and many others using these methods. In other words, as an implementer, all you should need to implement is those two functions, and the rest would be syntactic sugar for the user. Unfortunately, the way 'invoke' and 'invokeAll' handle arguments is a bit clunky, and really, it’s just screaming for lambdas to be passed in and CompletableFuture instances to be returned (Java 8!).

So, when Infinispan moved to Java 8, we decided to revisit these concepts and see if we could come up with a better, distilled map-like interface to be used as either a caching or data grid API.

New Functional Map API

Infinispan’s Functional Map API is a distilled map-like asynchronous API which uses lambdas to interact with data.

Asynchronous and Lazy

Being an asynchronous API, all methods that return a single result, return a CompletableFuture which wraps the result, so you can use the resources of your system more efficiently by having the possibility to receive callbacks when the CompletableFuture has completed, or you can chain or compose them with other CompletableFuture. If you do want to block the thread and wait for the result, just as it happens with a ConcurrentMap or JCache method call, you can simply call CompletableFuture.get() (for such situations, we are working on finding ways to avoid unnecessary thread creation when the caller will block on the CompletableFuture).
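The two consumption styles can be contrasted with a short JDK-only sketch. `fetchLength` is a hypothetical asynchronous operation made up for this illustration; the first helper composes two results without blocking, the second blocks the caller with CompletableFuture.get().

```java
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.ExecutionException;

public class CallbackVsBlockingSketch {

    // Hypothetical asynchronous operation returning a single result.
    static CompletableFuture<Integer> fetchLength(String value) {
        return CompletableFuture.supplyAsync(value::length);
    }

    // Non-blocking: combine two pending results once both have completed.
    static CompletableFuture<Integer> combinedLength(String a, String b) {
        return fetchLength(a).thenCombine(fetchLength(b), Integer::sum);
    }

    // Blocking: the calling thread waits for the result.
    static int blockingLength(String value) {
        try {
            return fetchLength(value).get();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException(e);
        } catch (ExecutionException e) {
            throw new IllegalStateException(e.getCause());
        }
    }

    public static void main(String[] args) {
        combinedLength("cache", "grid").thenAccept(System.out::println).join();
        System.out.println(blockingLength("cache"));
    }
}
```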

For those operations that return multiple results, the API returns instances of a https://github.com/infinispan/infinispan/blob/master/commons/src/main/java/org/infinispan/commons/api/functional/Traversable.java[Traversable] interface which offers a lazy pull-style API for working with multiple results. Although push-style interfaces for handling multiple results, such as RxJava, are fully asynchronous, they’re harder to use from a user’s perspective. Traversable, being a lazy pull-style API, can still be asynchronous underneath since the user can decide to work on the traversable at a later stage, and the Traversable implementation itself can decide when to compute those results.

Lambda transparency

Since the content of the lambdas is transparent to Infinispan, the API has been split into 3 interfaces for read-only (ReadOnlyMap), read-write (ReadWriteMap) and write-only (WriteOnlyMap) operations respectively, in order to provide hints to the Infinispan internals on the type of work needed to support lambdas.

For example, Infinispan has been designed in such way that our 'ConcurrentMap.get()' and 'JCache.getAll()' implementations do not require locks to be acquired. These get()/getAll() operations are read-only operations, and hence if you call our functional map ReadOnlyMap’s 'eval()' or 'evalMany()' operations, you get the same benefit. A key advantage of ReadOnlyMap’s 'eval()' and 'evalMany()' operations is that they take lambdas as parameters which means the returned types are more flexible, so we can return a value associated with the key, or we can return a boolean if a value has the expected contents, or we can return some metadata parameters from it, e.g. last accessed time, last modified time, creation time, lifespan, version information…etc.

Another important hint that is required to make efficient use of the system is to know when a write-only operation is being executed. Write-only operations require locks to be acquired and as demonstrated by JCache’s 'https://github.com/jsr107/jsr107spec/blob/v1.0.0/src/main/java/javax/cache/Cache.java#L505[void removeAll()]' and 'void put(K, V)' or ConcurrentMap’s 'https://docs.oracle.com/javase/8/docs/api/java/util/Map.html#putAll-java.util.Map-[putAll()]', they do not require the previous value to be queried or read, which as explained above is a very important optimization since reading the previous value might require the persistence layer or a remote node to be queried. WriteOnlyMap’s 'https://github.com/infinispan/infinispan/blob/master/commons/src/main/java/org/infinispan/commons/api/functional/FunctionalMap.java#L281[eval()]', 'https://github.com/infinispan/infinispan/blob/master/commons/src/main/java/org/infinispan/commons/api/functional/FunctionalMap.java#L351[evalMany()]', and 'https://github.com/infinispan/infinispan/blob/master/commons/src/main/java/org/infinispan/commons/api/functional/FunctionalMap.java#L414[evalAll()]' follow this same pattern with the added flexibility for the lambda to decide what kind of write operation to execute.

The final type of operations we have are read-write operations, and within this category we find CAS-like (Compare-And-Swap) operations. This type of operation requires the previous value associated with the key to be read and locks to be acquired before executing the lambda. Most ConcurrentMap and JCache operations fall within this domain, including: 'V put(K, V)', 'https://github.com/jsr107/jsr107spec/blob/v1.0.0/src/main/java/javax/cache/Cache.java#L283[boolean putIfAbsent(K, V)]', 'V replace(K, V)', 'boolean replace(K, V, V)'…etc. ReadWriteMap’s 'eval()', 'evalMany()' and 'evalAll()' provide a way to implement the vast majority of these operations thanks to the flexibility of the lambdas passed in. So you can make CAS-like comparisons based not only on value equality but also on metadata parameter equality, such as version information, and you can send back the previous value or a boolean to signal whether the CAS-like comparison succeeded.
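As a rough illustration of a CAS-like comparison based on metadata rather than value equality, here is a minimal sketch that uses only JDK types. The names `VersionedCasSketch`, `VersionedValue` and `replaceIfVersion` are hypothetical and chosen for this example; this is a local model of the idea, not Infinispan's ReadWriteMap API.

```java
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.ConcurrentMap;

class VersionedCasSketch {
    // Hypothetical holder pairing a value with a version (the "metadata").
    static final class VersionedValue {
        final String value;
        final long version;

        VersionedValue(String value, long version) {
            this.value = value;
            this.version = version;
        }
    }

    private final ConcurrentMap<String, VersionedValue> store = new ConcurrentHashMap<>();

    // Write-only style operation: the previous entry is never inspected.
    void put(String key, String value, long version) {
        store.put(key, new VersionedValue(value, version));
    }

    // Read-only style operation: returns the stored value, or null if absent.
    String get(String key) {
        VersionedValue current = store.get(key);
        return current == null ? null : current.value;
    }

    // Read-write style operation: the previous entry is read, compared by
    // version (not by value equality), and replaced only if the version matches.
    boolean replaceIfVersion(String key, long expectedVersion, String newValue) {
        boolean[] replaced = {false};
        // computeIfPresent runs the lambda atomically for the given key.
        store.computeIfPresent(key, (k, prev) -> {
            if (prev.version != expectedVersion) {
                return prev; // version mismatch: keep the existing entry
            }
            replaced[0] = true;
            return new VersionedValue(newValue, prev.version + 1);
        });
        return replaced[0];
    }

    public static void main(String[] args) {
        VersionedCasSketch map = new VersionedCasSketch();
        map.put("k", "v1", 1L);
        System.out.println(map.replaceIfVersion("k", 1L, "v2")); // true
        System.out.println(map.replaceIfVersion("k", 1L, "v3")); // false, version is now 2
        System.out.println(map.get("k"));                        // v2
    }
}
```

The boolean result plays the role of the "did the CAS succeed" signal mentioned above; a variant could return the previous value instead.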

$DEITY, I need to learn a new API!!!

This new functional Map-like API is meant to complement existing Key/Value Infinispan API offerings, so you’ll still be able to use ConcurrentMap or JCache standard APIs if that’s what suits your use case best.

The target audience for this new API is either:

-

Distributed or persistent caching/in-memory data-grid users that want to benefit from CompletableFuture and/or Traversable for async/lazy data grid or caching data manipulation. The clear advantage here is that threads do not need to be idle waiting for remote operations to complete, but instead these can be notified when remote operations complete and then chain them with other subsequent operations.

-

Users wanting to go beyond the standard operations exposed by ConcurrentMap and JCache, for example, if you want to do a replace operation using metadata parameter equality instead of value equality, or if you want to retrieve metadata information from values…etc.

Internally, we feel that this new functional Map-like API distills the Map-like APIs that we currently offer (including ConcurrentMap and JCache) and gets rid of a lot of duplication in our AdvancedCache API (e.g. 'https://docs.jboss.org/infinispan/8.0/apidocs/org/infinispan/AdvancedCache.html#getCacheEntry-java.lang.Object-[getCacheEntry()]', 'https://docs.jboss.org/infinispan/8.0/apidocs/org/infinispan/commons/api/AsyncCache.html#getAsync-K-[getAsync()]', 'https://docs.jboss.org/infinispan/8.0/apidocs/org/infinispan/commons/api/AsyncCache.html#putAsync-K-V-[putAsync()]', 'put(K, V, Metadata)'…etc), and hence down the line, we’d want all these APIs to be implemented using the new functional Map-like API. By doing that, we hope to reduce the number of commands that our internal architecture implements, hence reducing our code base.

This new API also offers a new approach for passing per-invocation parameters, and much more flexible Metadata handling compared to our current approach. As we dig into this new API in upcoming blog posts, we’ll explain the differences and the advantages they provide.

Functional Map API usage examples

To give you a little taste of what the API looks like, here is a write-only operation to associate a key with a value, whose CompletableFuture has been chained so that when it completes, a read-only operation can be executed to read the stored value, and when that completes, print it to the system output:
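The chaining described above can be sketched with JDK types alone. The class `AsyncMapSketch` and its `write`, `read` and `writeThenRead` methods are hypothetical stand-ins invented for this illustration; they only model the "write, then read, then print" flow with CompletableFuture and are not the Functional Map API itself.

```java
import java.util.Map;
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.ConcurrentHashMap;

class AsyncMapSketch {
    private final Map<String, String> store = new ConcurrentHashMap<>();

    // Hypothetical write-only operation: stores the value and returns nothing.
    CompletableFuture<Void> write(String key, String value) {
        return CompletableFuture.runAsync(() -> store.put(key, value));
    }

    // Hypothetical read-only operation: returns the stored value (or null).
    CompletableFuture<String> read(String key) {
        return CompletableFuture.supplyAsync(() -> store.get(key));
    }

    // Chain the two: the read starts only once the write has completed.
    CompletableFuture<String> writeThenRead(String key, String value) {
        return write(key, value).thenCompose(ignored -> read(key));
    }

    public static void main(String[] args) {
        AsyncMapSketch map = new AsyncMapSketch();
        map.writeThenRead("key1", "value1")
           .thenAccept(System.out::println) // prints the stored value when the read completes
           .join();                         // block only so the demo does not exit early
    }
}
```

No thread sits idle between the two steps: `thenCompose` registers the read as a continuation of the write, and `join()` is used here only to keep the demo process alive.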

You can find more examples of this new API in FunctionalConcurrentMap and FunctionalJCache classes, which are implementations of ConcurrentMap and JCache respectively using the new Functional Map API.

Tell me more!!

Over the next few weeks I’ll be posting examples looking at the finer details of these new Functional Map APIs, but if you’re eager to get started, check the classes in org.infinispan.functional package, FunctionalConcurrentMap and FunctionalJCache which are ConcurrentMap and JCache implementations based on these Functional Map APIs, and FunctionalMapTest which demonstrates operations that go beyond what ConcurrentMap and JCache offer.

Happy (functional) hacking :)

Galder

Tags: functional introduction API lambda

Monday, 10 August 2015

Infinispan 8.0.0.Beta3 out with Lucene 5, Functional API, Templates...etc

Infinispan 8.0.0.Beta3 is out with a lot of new bells and whistles including:

-

Infinispan querying has been upgraded to be usable with Lucene 5, with a lot of improvements particularly in terms of memory efficiency. With this upgrade, Lucene 4 support has been removed from the community project.

-

Configuration templates are finally here, meaning that users can now define cache configurations as template configurations, and then create cache configurations using those templates as base configuration.

-

A brand new, experimental, FunctionalMap API has been added that takes advantage of the lambda and async programming improvements introduced in Java 8. We consider this an advanced API that will enable Infinispan to grow beyond the well known javax.cache.Cache and java.util.concurrent.ConcurrentMap APIs. In the next few days I’ll be posting multiple, detailed blog posts looking at the different aspects of the API. If you are eager to get started, you can first have a look at a ConcurrentMap implementation using FunctionalMap to get a feel for it. Your feedback is highly appreciated!

-

Pedro has completed ISPN-2849 which should provide much better performance by liberating precious JGroups threads from having to wait for locks to be acquired remotely.

To get more details, check our release notes and go to our downloads page to find out how to get started with this Infinispan version.

Happy hacking :)

Galder

Tags: release API

Monday, 16 September 2013

New persistence API in Infinispan 6.0.0.Alpha4

The existing CacheLoader/CacheStore API has been around since Infinispan 4.0. In this release of Infinispan we’ve taken a major step forward in both simplifying the integration with persistence and opening the door for some pretty significant performance improvements.

What’s new

So here’s what the new persistence integration brings to the table:

-

alignment with JSR-107: now we have a CacheWriter and CacheLoader interface similar to the loader and writer in JSR 107, which should considerably help writing portable stores across JCache compliant vendors

-

simplified transaction integration: all the locking is now handled within the Infinispan layer, so implementors don’t have to be concerned with coordinating concurrent access to the store (the old LockSupportCacheStore is dropped for that reason).

-

parallel iteration: it is now possible to iterate over entries in the store with multiple threads in parallel. Map/Reduce tasks immediately benefit from this, as the map/reduce tasks now run in parallel over both the nodes in the cluster and within the same node (multiple threads)

-

reduced serialization (translating into less CPU usage): the new API allows exposing the stored entries in serialized format. If an entry is fetched from persistent storage for the sole purpose of being sent remotely, we no longer need to deserialize it (when reading from the store) and serialize it back (when writing to the wire). Now we can write the serialized format to the wire exactly as read from the storage

API

Now let’s take a look at the API in more detail:

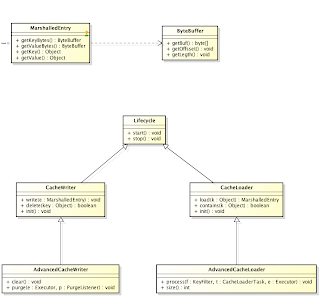

The diagram above shows the main classes in the API:

-

ByteBuffer - abstracts the serialized form of an object

-

MarshalledEntry - abstracts the information held within a persistent store corresponding to a key-value added to the cache. Provides methods for reading this information both in serialized (ByteBuffer) and deserialized (Object) format. Normally data read from the store is kept in serialized format and lazily deserialized on demand, within the MarshalledEntry implementation

-

CacheWriter and CacheLoader provide basic methods for reading and writing to a store

-

AdvancedCacheLoader and AdvancedCacheWriter provide operations to manipulate the underlying storage in bulk: parallel iteration and purging of expired entries, clear and size.
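To make the "kept in serialized format and lazily deserialized on demand" idea concrete, here is a minimal sketch using plain JDK serialization. `LazyEntrySketch`, `LazyEntry` and their methods are hypothetical names for this example and do not reproduce the actual MarshalledEntry or ByteBuffer interfaces.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.ObjectInputStream;
import java.io.ObjectOutputStream;
import java.io.Serializable;
import java.io.UncheckedIOException;

class LazyEntrySketch {
    // Hypothetical stand-in for an entry read from a store: it keeps the
    // serialized bytes and deserializes only when the value is requested.
    static final class LazyEntry {
        private final byte[] valueBytes;
        private Object value;         // cached after the first deserialization
        private int deserializations; // counter kept for illustration only

        LazyEntry(byte[] valueBytes) {
            this.valueBytes = valueBytes;
        }

        // Serialized form: can be forwarded to the wire without deserializing.
        byte[] getValueBytes() {
            return valueBytes;
        }

        // Deserialized form: computed lazily, at most once.
        synchronized Object getValue() {
            if (value == null) {
                try (ObjectInputStream in =
                         new ObjectInputStream(new ByteArrayInputStream(valueBytes))) {
                    value = in.readObject();
                    deserializations++;
                } catch (IOException | ClassNotFoundException e) {
                    throw new IllegalStateException("Cannot deserialize entry", e);
                }
            }
            return value;
        }

        synchronized int deserializationCount() {
            return deserializations;
        }
    }

    // Helper that produces the bytes a store would hold for a value.
    static byte[] serialize(Serializable object) {
        ByteArrayOutputStream bytes = new ByteArrayOutputStream();
        try (ObjectOutputStream out = new ObjectOutputStream(bytes)) {
            out.writeObject(object);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return bytes.toByteArray();
    }

    public static void main(String[] args) {
        LazyEntry entry = new LazyEntry(serialize("hello"));
        byte[] wire = entry.getValueBytes();              // forwarded as-is
        System.out.println(wire.length > 0);              // true
        System.out.println(entry.deserializationCount()); // 0
        System.out.println(entry.getValue());             // hello
        entry.getValue();                                 // served from the cached value
        System.out.println(entry.deserializationCount()); // 1
    }
}
```

An entry that is only forwarded to another node never pays the deserialization cost, which is the saving the "reduced serialization" point refers to.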

A provider might choose to only implement a subset of these interfaces:

-

Not implementing the AdvancedCacheWriter means the given writer cannot be used for purging expired entries or for clear

-

Not implementing the AdvancedCacheLoader means the information stored in the given loader is not used for preloading, nor for the map/reduce iteration
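The effect of implementing only a subset of the interfaces can be sketched as follows. `BasicWriter` and `BulkWriter` are simplified, hypothetical stand-ins for the basic and advanced writer contracts, not the real Infinispan signatures; the sketch only shows a caller skipping a bulk operation when a store does not offer it.

```java
import java.util.HashMap;
import java.util.Map;

class StoreCapabilitiesSketch {
    // Simplified, hypothetical stand-ins for the basic and advanced contracts.
    interface BasicWriter {
        void write(String key, String value);
    }

    interface BulkWriter extends BasicWriter {
        void clear();
    }

    // A store that only implements the basic contract.
    static final class WriteOnlyStore implements BasicWriter {
        final Map<String, String> data = new HashMap<>();

        public void write(String key, String value) {
            data.put(key, value);
        }
    }

    // A store that also supports the bulk operation.
    static final class ClearableStore implements BulkWriter {
        final Map<String, String> data = new HashMap<>();

        public void write(String key, String value) {
            data.put(key, value);
        }

        public void clear() {
            data.clear();
        }
    }

    // The caller checks which contract a store implements and skips the
    // bulk operation for stores that only offer the basic one.
    static boolean clearIfSupported(BasicWriter store) {
        if (store instanceof BulkWriter) {
            ((BulkWriter) store).clear();
            return true;
        }
        return false;
    }

    public static void main(String[] args) {
        WriteOnlyStore basic = new WriteOnlyStore();
        ClearableStore bulk = new ClearableStore();
        basic.write("k", "v");
        bulk.write("k", "v");
        System.out.println(clearIfSupported(basic) + " " + basic.data.size()); // false 1
        System.out.println(clearIfSupported(bulk) + " " + bulk.data.size());   // true 0
    }
}
```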

If you’re looking at migrating your existing store to the new API, looking at the SingleFileStore for inspiration can be of great help.

Configuration

And finally, the way the stores are configured has changed:

-

the 5.x loaders element is now replaced with persistence

-

both the loaders and writers are configured through a unique store element (vs loader and store, as allowed in 5.x)

-

the preload and shared attributes are configured at each individual store, giving more flexibility when it comes to configuring multiple chained stores

Cheers,

Mircea

Tags: persistence jsr 107 loader store performance API

Wednesday, 09 November 2011

More locking improvements in Infinispan 5.1.0.BETA4

The latest beta in the Infinispan 5.1 "Brahma" series is out. So, what’s in Infinispan 5.1.0.BETA4? Here are the highlights:

-

A hugely important lock acquisition improvement has been implemented that results in locks being acquired in only a single node in the cluster. This means that deadlocks as a result of multiple nodes updating the same key are no longer possible. Concurrent updates on a single key will now be queued in the node that 'owns' that key. For more info, please check the design wiki and keep an eye on this blog because Mircea Markus, who’s the author of this enhancement, will be explaining it in more detail very shortly. Please note that you don’t need to make any configuration or code changes to take advantage of this improvement.

-

A bunch of classes and interfaces in the core/ module have been migrated to an api/ and commons/ module in order to reduce the size of the dependencies that the Hot Rod Java client had. As a result, there’s been a change in the hierarchy of Cache and CacheContainer classes, with the introduction of BasicCache and BasicCacheContainer, which are parent classes of the existing Cache and CacheContainer classes respectively. What’s important is that Hot Rod clients must now code against BasicCache and BasicCacheContainer rather than Cache and CacheContainer. So previous code that was written like this will no longer compile:

import org.infinispan.Cache;

import org.infinispan.manager.CacheContainer;

import org.infinispan.client.hotrod.RemoteCacheManager;

...

CacheContainer cacheContainer = new RemoteCacheManager();

Cache cache = cacheContainer.getCache();

Instead, if Hot Rod clients want to continue using interfaces higher up the hierarchy from the remote cache/container classes, they’ll have to write:

import org.infinispan.BasicCache;

import org.infinispan.manager.BasicCacheContainer;

import org.infinispan.client.hotrod.RemoteCacheManager;

...

BasicCacheContainer cacheContainer = new RemoteCacheManager();

BasicCache cache = cacheContainer.getCache();

Previous code that interacted against the RemoteCache and RemoteCacheManager should work as it used to:

import org.infinispan.client.hotrod.RemoteCache;

import org.infinispan.client.hotrod.RemoteCacheManager;

...

RemoteCacheManager cacheContainer = new RemoteCacheManager();

RemoteCache cache = cacheContainer.getCache();

We apologise for any inconvenience caused, but we think that Hot Rod clients will hugely benefit from this change, which vastly reduces the number of dependencies they need.

-

Finally, a few words about the ZIP distribution file. In BETA4 we’ve added some cache store implementations that were missing from previous releases, such as the RemoteCacheStore that talks to Hot Rod servers, and we’ve added a brand new demo application that implements a near-caching pattern using JMS. Please be aware that this demo is just a simple prototype of how near caches could be built using Infinispan and HornetQ.

As always, please keep the feedback coming. You can download the release from here and you can find further details on the issues addressed in the changelog.

Cheers, Galder

Tags: locking API